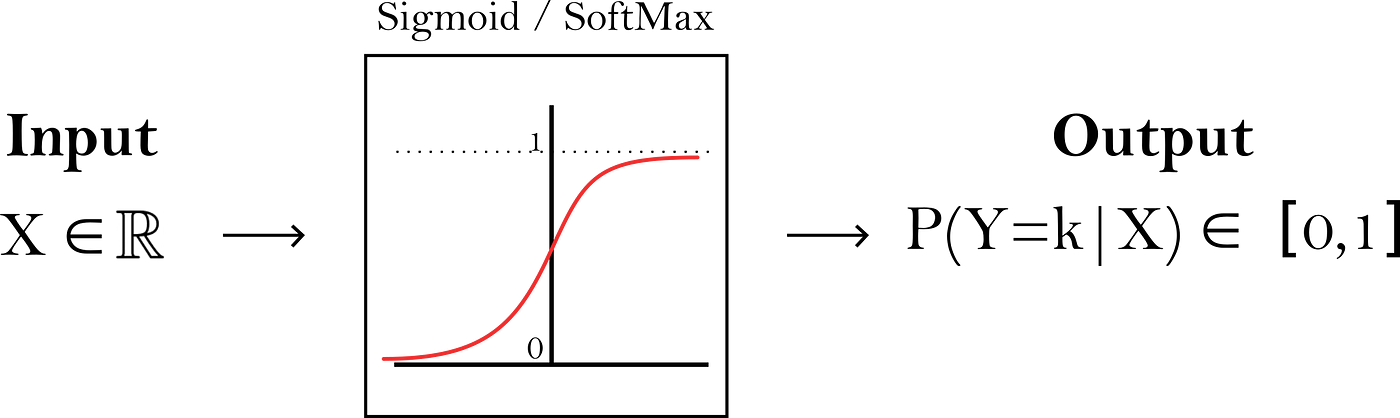

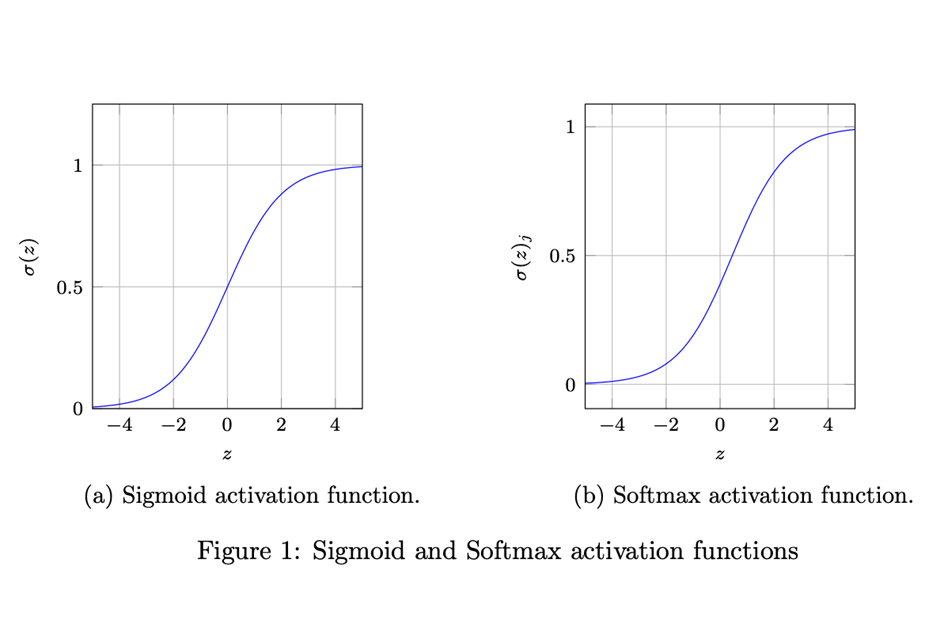

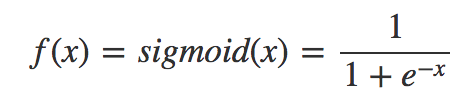

Sigmoid and SoftMax Functions in 5 minutes | by Gabriel Furnieles | Sep, 2022 | Towards Data Science

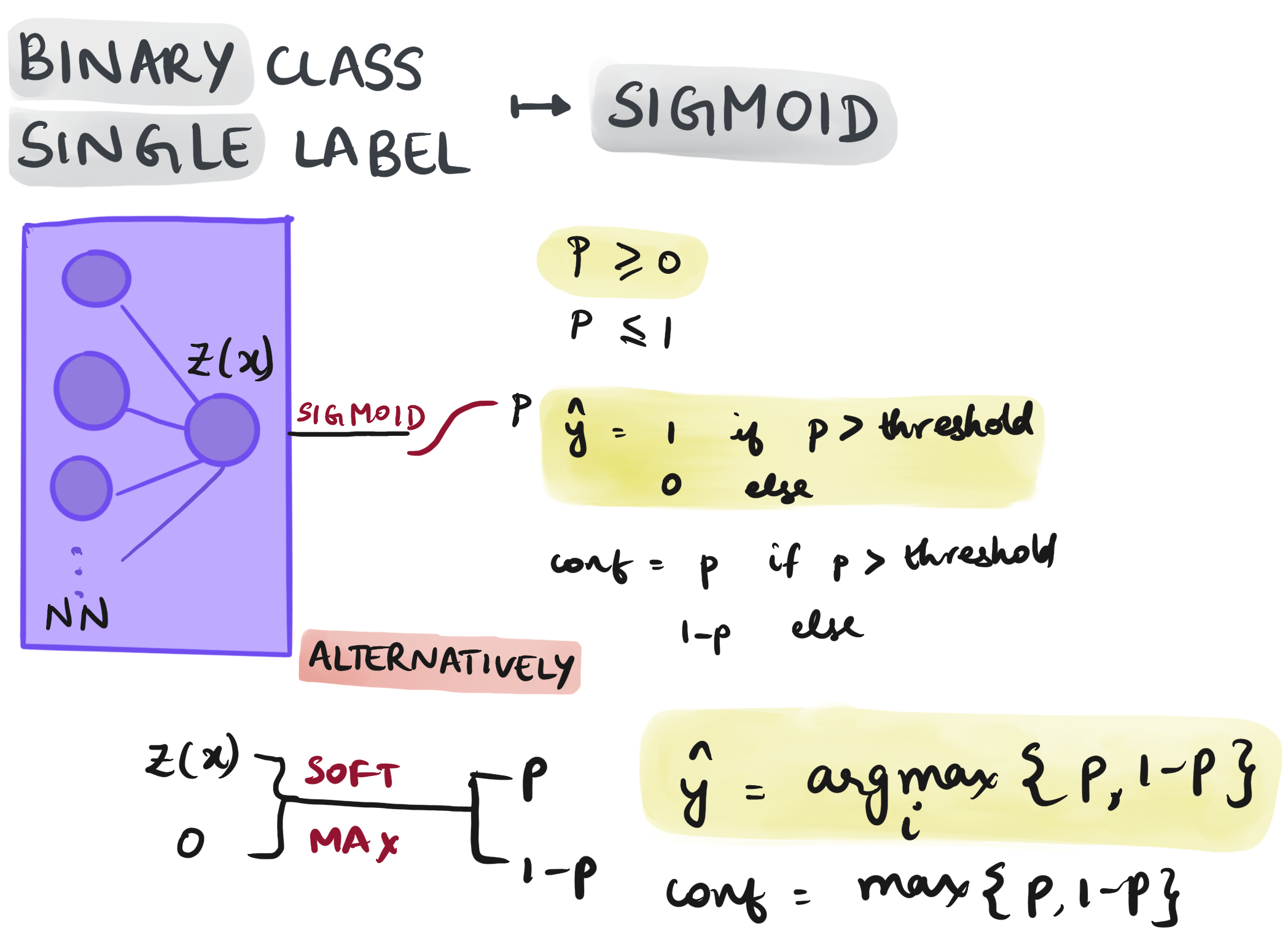

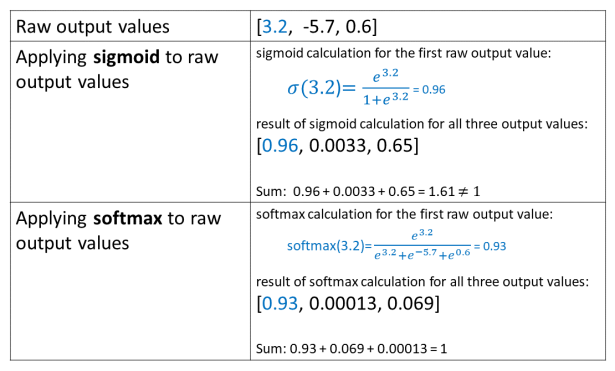

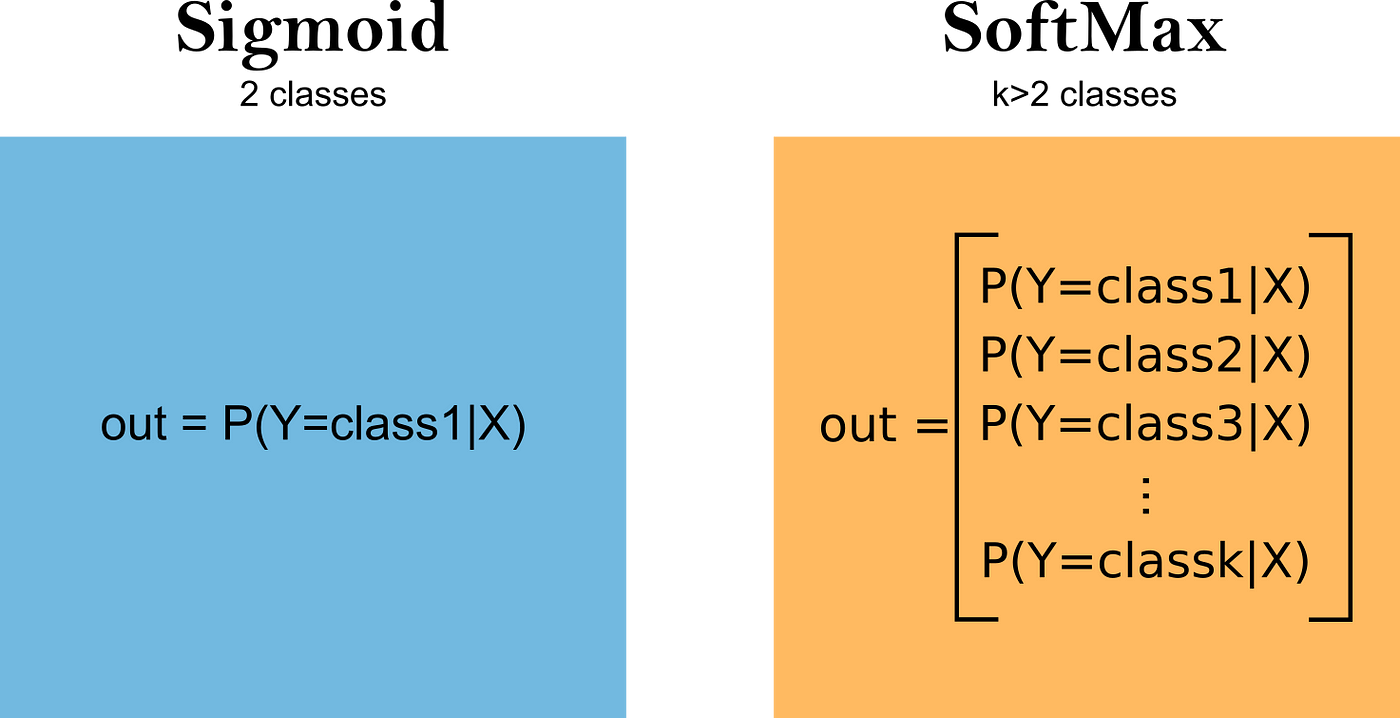

The Differences between Sigmoid and Softmax Activation Functions | by Nikola Basta | Arteos AI | Medium

Why is the derivative of sigmoid nonlinearity often implemented as x(1-x)? The derivative of sigmoid(x) is defined as sigmoid(x)*(1-sigmoid(x)). - Quora

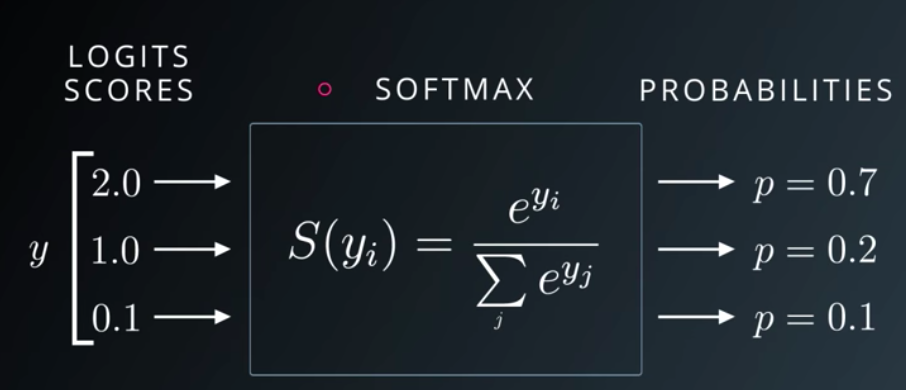

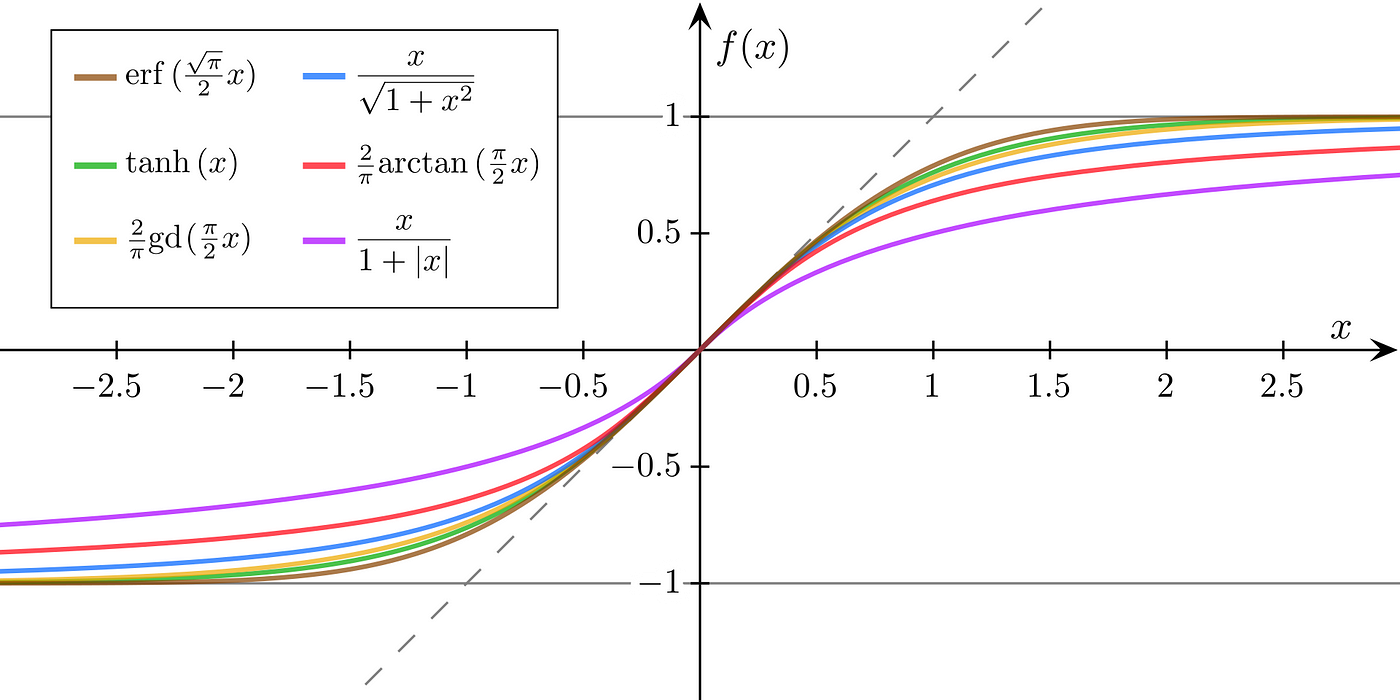

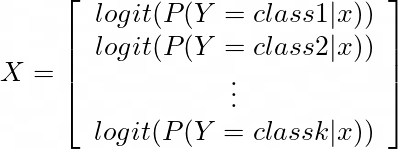

Understanding Sigmoid, Logistic, Softmax Functions, and Cross-Entropy Loss (Log Loss) in Classification Problems | by Zhou (Joe) Xu | Towards Data Science

neural networks - Softmax in last layer - error rises but when using sigmoid error decreases - Cross Validated
